Artemio recently posted a detailed writeup on the 240p Test Suite’s Patreon page explaining why audio analysis of “modern retro” consoles can show significantly different readings than an original console: https://www.patreon.com/posts/nt-mini-and-when-34461897

I strongly recommend that anyone interested read the post, and hopefully sign up for the Patreon, but here’s the basic idea of what’s happening: the HDMI spec is designed around TV signals, not the wide variety of signals produced by classic game consoles. For these “modern retro” consoles to be 100% compatible with all TVs, the signal has to be shifted ever so slightly from its original refresh rate, which makes the game run imperceptibly faster or slower.

So, what does this mean for gamers? NOTHING. You won’t hear an audio pitch difference, and unless you run TASes (tool-assisted speedruns), it’s impossible to notice the video speed difference.
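To put “imperceptibly” into numbers, here is a minimal sketch of the arithmetic. If a console’s native refresh rate is nudged to match an HDMI mode, everything (including audio) plays back at the ratio of the two rates, and the pitch change in cents (hundredths of a semitone) is 1200 × log2 of that ratio. The refresh rates below are illustrative assumptions, not figures from Artemio’s post: roughly 60.0988 Hz for an NTSC NES and exactly 60 Hz for the HDMI output mode.

```python
import math


def speed_ratio(original_hz: float, output_hz: float) -> float:
    """Playback speed of the HDMI output relative to the original console."""
    return output_hz / original_hz


def pitch_shift_cents(original_hz: float, output_hz: float) -> float:
    """Pitch change in cents (1/100 of a semitone) caused by the speed change."""
    return 1200.0 * math.log2(speed_ratio(original_hz, output_hz))


# Illustrative assumption: an NTSC NES runs at roughly 60.0988 Hz,
# and the HDMI output mode runs at exactly 60 Hz.
ORIGINAL_HZ = 60.0988
HDMI_HZ = 60.0

ratio = speed_ratio(ORIGINAL_HZ, HDMI_HZ)
print(f"speed change: {(ratio - 1) * 100:+.3f}%")
print(f"pitch change: {pitch_shift_cents(ORIGINAL_HZ, HDMI_HZ):+.2f} cents")
```

Under those assumptions the game runs about 0.16% slow and the audio drops by under 3 cents, a small fraction of a semitone. Swap in the actual rates for a given console and output mode to get its figure.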

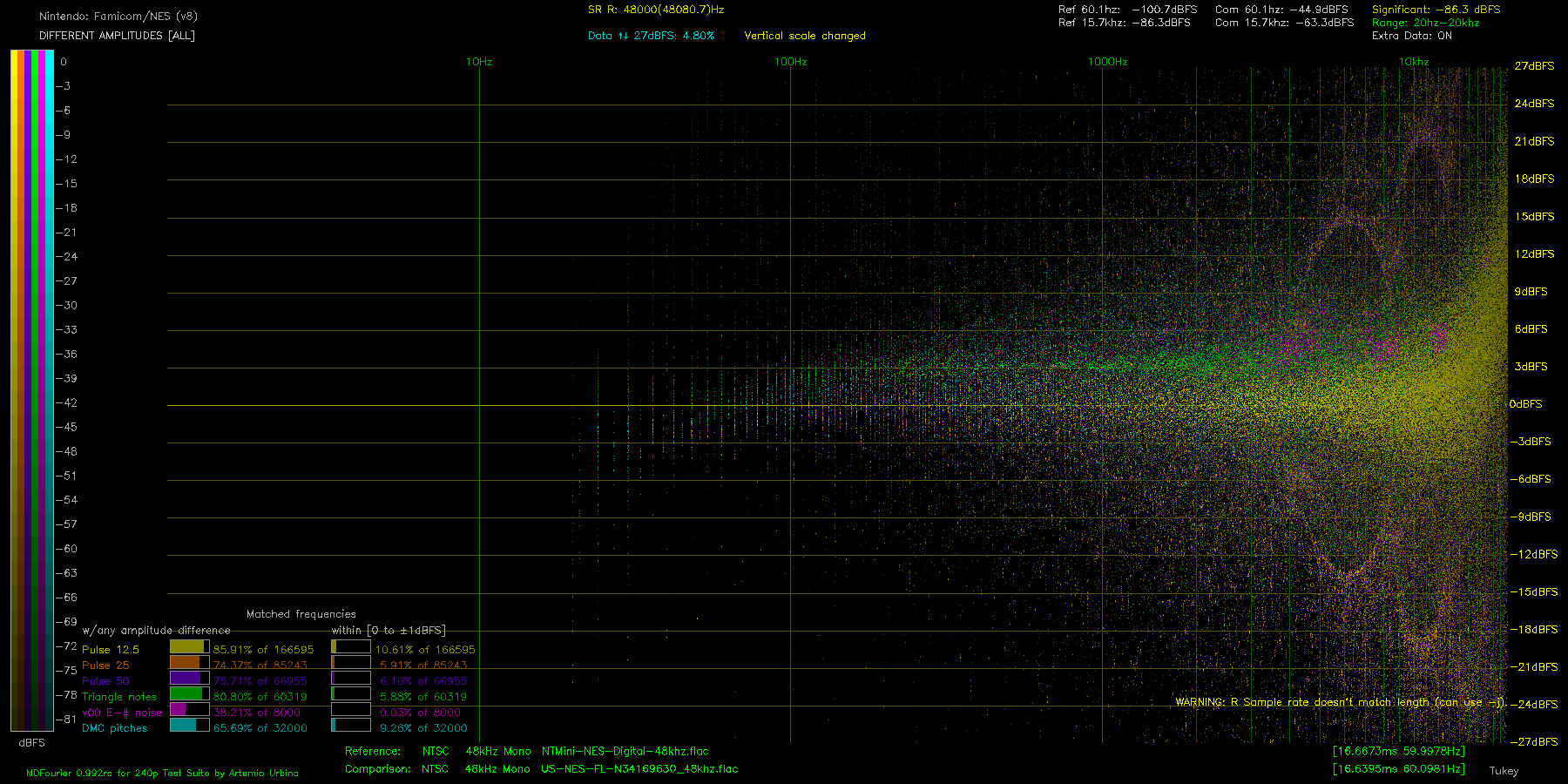

However, in-depth analysis tools like MD Fourier compare as much data as possible, so they highlight changes like this. Also, a pure digital-to-digital HDMI signal has a noise floor so low that it’s almost non-existent. Any analog audio output, by comparison, will have some noise, so comparing it against a pure digital signal will show that noise floor difference too.
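For a sense of how low “almost non-existent” is, the textbook limit for an ideal N-bit digital signal is a signal-to-noise ratio of about 6.02·N + 1.76 dB for a full-scale sine wave. The sketch below assumes the HDMI audio is 16-bit or 24-bit LPCM; it models only ideal quantization noise, not the extra noise any real analog output stage adds.

```python
import math


def ideal_quantization_snr_db(bits: int) -> float:
    """Theoretical SNR of an ideal N-bit quantizer for a full-scale sine wave.

    Equivalent to the familiar 6.02 * bits + 1.76 dB rule of thumb.
    """
    return 20.0 * math.log10(2 ** bits) + 10.0 * math.log10(1.5)


# Assumption: typical LPCM bit depths carried over HDMI.
for bits in (16, 24):
    print(f"{bits}-bit: noise floor ~{ideal_quantization_snr_db(bits):.1f} dB below full scale")
```

An analog capture of an original console sits well above that floor because of its own circuitry, which is why the two can look so different in an analysis even when both sound fine.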

Overall, tools like MD Fourier are excellent and are enabling true audio preservation for every console they’ve been ported to. It’s just important that we take a moment to understand their purpose and how to use them, as well as why some results might look “bad” but are actually perfectly fine given the use case.
